Or why do academic institutions shield predators? Many working scientists, particularly those early in their careers or those oblivious to practical realities, maintain an idealistic view of the scientific enterprise. They see science as driven by curious, passionate, and skeptical scholars working to build an increasingly accurate and all-encompassing understanding of the material world and the various phenomena associated with it, ranging from the origins of the universe and the Earth to the development of the brain and the emergence of consciousness and self-consciousness (1). At the same time, the discipline of science can be difficult to maintain (see PLoS post: The pernicious effects of disrespecting the constraints of science). Scientific research relies on understanding what people have already discovered and established to be true; all too often, exploring the literature associated with a topic reveals that one’s brilliant and totally novel “first of its kind” or “first to show” observation or idea is only a confirmation or a modest extension of someone else’s previous discovery. That is the nature of the scientific enterprise, and a major reason why significant new discoveries are rare and why graduate students’ Ph.D. theses can take years to complete.

Acting to oppose a rigorous scholarly approach are the real-life pressures faced by working scientists: a competitive landscape in which only novel observations are rewarded, with research grants and various forms of fame or notoriety in one’s field, including a tenure-track or tenured academic position. Such pressures encourage one to distort the significance or novelty of one’s accomplishments; such exaggerations are tacitly encouraged by the editors of high-profile journals (e.g., Nature, Science) who seek to publish “high impact” claims, such as the claim for “Arsenic-life” (see link). As a recent and prosaic example, consider a paper that claims in its title that “Dietary Restriction and AMPK Increase Lifespan via Mitochondrial Network and Peroxisome Remodeling” (link), without mentioning (in the title) the rather significant fact that the effect was observed in the nematode C. elegans, whose lifespan is typically between 300 and 500 hours and which displays a trait not found in humans (or other vertebrates), namely the ability to assume a highly specialized “dauer” state that can survive hostile environmental conditions for months. Is the work wrong or insignificant? Certainly not, but it is presented to the unwary through the Harvard Gazette under the title “In pursuit of healthy aging: Harvard study shows how intermittent fasting and manipulating mitochondrial networks may increase lifespan,” with the clear implication that people, including Harvard alumni, might want to consider the adequacy of their retirement investments.

Such pleas for attention are generally placed in context quickly and their significance evaluated, at least within the scientific community, although many go on to stimulate the economic health of the nutritional supplement industry. Lower-level claims often go unchallenged, just part of the incessant buzz of pleas for attention in our excessively distracted society (see link). Given the reward structure of the modern scientific enterprise, the proliferation of such claims is not surprising. Even “staid” academics seek attention well beyond the immediate significance of their (taxpayer-funded) observations. Unfortunately, the explosively expanding size of the scientific enterprise makes policing such transgressions (generally through peer review or replication) difficult or impossible, at least in the short term.

The hype and exaggeration associated with some scientific claims for attention are not the most distressing aspect of the quest for “reputation.” Rather, it is the growing number of revelations that academic institutions have protected those guilty of abusing their dependent colleagues. These cases reflect how scientific research teams are organized. Most scientific studies involve groups of people working with one another, generating data, testing ideas, eventually publishing their observations and conclusions, and speculating on their broader implications.

Research groups can vary greatly in size. In some areas, research is carried out by isolated individuals, whether thinkers (theorists) or naturalists, in the mode of Darwin and Wallace. In other cases, groups are larger and include senior researchers, post-doctoral fellows, graduate students, technicians, undergraduates, and even high school students. Such research groups can range from the small (2 to 3 people) to the significantly larger (~20–50 people); the largest are associated with mega-projects, such as the human genome project and the Large Hadron Collider-based search for the Higgs boson (see: Physics paper sets record with more than 5,000 authors). A look at this site [link] describing the human genome project reflects two aspects of such mega-science: 1) while many thousands of people were involved [see Initial sequencing and analysis of the human genome], generally only the “big names” are singled out for valorization (e.g., receiving a Nobel Prize). That said, there would be little or no progress without the general scientific community that evaluates and extends ideas and observations. In this context, “lead investigators” are charged primarily with securing the funds needed to mobilize such groups and with convincing funders that the work is significant; it is the members of the group who work out the technical details and enable the project to succeed.

As with many such social groups, there are systems in play that serve to establish the status of the individuals involved, something (apparently) necessary in a system in which individuals compete for jobs, positions, and resources. Generally, one’s status is established through recommendations from others in the field, often the senior member(s) of one’s research group or the (generally small) group of senior scientists who work in the same or a closely related area. Professional status is particularly critical in academia, where the number of senior positions (e.g., tenured or tenure-track professorships) is limited. The result is a system that is increasingly susceptible to the formation of clubs, membership in which is often determined by who knows whom rather than by who has done what (see Steve McKnight’s “The curse of committees and clubs”). Over time, scientific social status translates into who is considered productive, important, trustworthy, or (using an oft-misused term) brilliant. Achieving status can mean putting up with abusive and unwanted behaviors (particularly sexual ones). Examples of such behavior have been emerging with increasing frequency and have been extensively described elsewhere (see Confronting Sexual Harassment in Science; More universities must confront sexual harassment; What’s to be done about the numerous reports of faculty misconduct dating back years and even decades?; Academia needs to confront sexism; and ‘The Trouble With Girls’: The Enduring Sexism in Science).

So why is abusive behavior tolerated? One might argue that it reflects humanity’s current and historical obsession with “stars,” pharaohs, kings, and dictators as isolated geniuses who make things work. Perhaps the most visible example of an abused scientist (although there are in fact many others; see History’s Most Overlooked Scientists) is Rosalind Franklin, whose data were essential to solving the structure of double-stranded DNA, yet whose contributions were consistently and systematically minimized, a clear example of sexual marginalization. In this light, many is the technician who got an experiment to “work,” leading to their research supervisor being awarded the prizes associated with the breakthrough (2).

Amplifying the star effect is the role of research status at the institutional level; an institution’s academic ranking is often based upon the presence of faculty “stars.” Perhaps surprisingly to those outside of academia, an institution’s research status, as reflected in the number of stars on staff, often trumps its educational effectiveness, particularly with undergraduates, that is, the people who pay the bulk of the institution’s running costs. In this light, it is not surprising that research stars who display various abusive behaviors (often toward women) are shielded by their institutions from public censure.

So what is to be done? My own modest proposal (to be described in more detail in a later post) is to place greater emphasis on how effectively institutions (and the departments within them) promote undergraduate educational success. This would provide a counterbalancing force that could (might?) place research status in a more realistic context.

a footnote or two:

- on the assumption that there is nothing but a material world.

- Although I am no star, I would acknowledge Joe Dent, who worked out the whole-mount immunocytochemical methods that we have used extensively in our work over the years.

- Thanks to Becky for editorial comments as well as a dramatic reading!

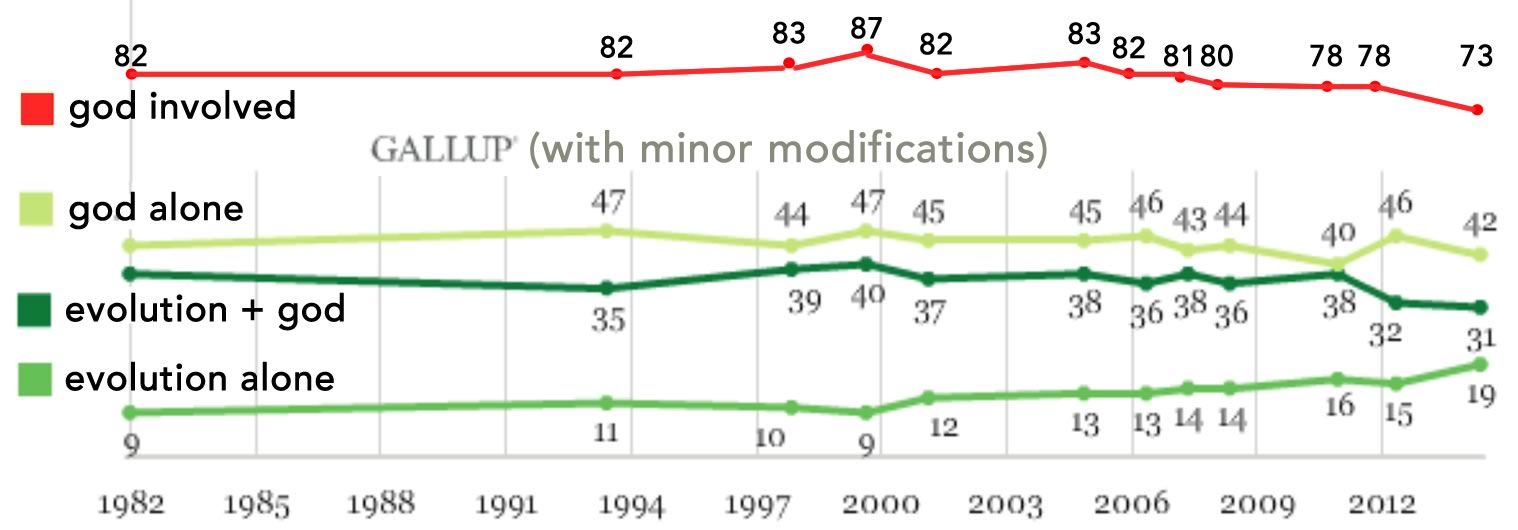